Big Data - Brains vs. Hype

12 November 2012

This past week was PASS Summit 2012, the annual gathering of database ~~nerds~~ professionals to learn about database management, business intelligence, and upcoming trends. A common theme this year was big data.

Few people could agree on a definition or the implications of _big data_; there is a lot of FUD going around. This is tragically ironic because big data tools and techniques arose as a _backlash_ to misinformation and silos. The original MapReduce paper (Dean and Ghemawat, 2004) describes why it was built: as a way for more Google employees to do more analysis on massive data sets, to help Google build better products.

The goal: Make data-driven decisions.

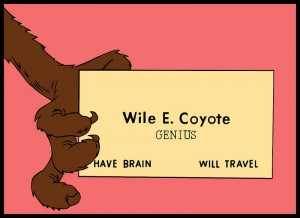

It’s about Brains, Stupid

Data has no value on its own. Storing all your data in Hadoop gives you nothing but a very big bill.

The goal isn’t big data. The goal is better decisions.

To make better decisions, you need data and brains. The benefit comes when talented people analyze data and use that analysis to make things better. Great tools are not a panacea.

Here is a handy guide to help you decide whether your organization can build, use, and benefit from a big data project:

| Question | Yes | No |
|---|---|---|
| Does your organization make data-driven decisions more than 50% of the time? | +100 | -500 |
| Does your organization currently make data-driven decisions more than 80% of the time? | +200 | -100 |
| Do you have people with the statistical and programming skills to analyze data well? | +200 | -200 |
| Do you currently let projects fail, and learn from the failure? | +75 | -150 |
| Are your colleagues curious and open-minded? | +50 | -200 |
| Do you use your existing data as much as possible? | +50 | -50 |
| Think of a data set relevant to your business. Can you brainstorm at least 10 uses for it? | +50 | -100 |
| Do you understand the design and use of ‘big data’ tools well enough to tell marketing from reality? | +25 | -400 |

Add up all of your scores:

- 300+: Your team and culture can make things happen. Go forth and be awesome.

- 200 to 300: You can probably benefit from a big data project, but be sure to address gaps.

- 100 to 200: You’ll benefit more from helping your organization become more data-driven, curious, and honest than from any magic project or product.

- Less than 0: Watch out for pointy-haired bosses and dysfunctional office politics.
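If you would rather let a script do the adding, here is a minimal Python sketch of the scorecard. The point values come straight from the table above; the key names, the function names, and the handling of band edges are my own assumptions. In particular, I assume a score of exactly 200 counts as "200 to 300" and exactly 300 counts as "300+", and since the guide says nothing about scores from 0 to 99, the sketch returns `None` there rather than inventing a verdict.

```python
# Point values copied from the table: (points for "yes", points for "no").
# The key names are hypothetical labels chosen for this sketch.
QUESTIONS = {
    "data_driven_over_50_percent": (100, -500),
    "data_driven_over_80_percent": (200, -100),
    "statistical_and_programming_skill": (200, -200),
    "learn_from_failed_projects": (75, -150),
    "curious_open_minded_colleagues": (50, -200),
    "use_existing_data": (50, -50),
    "ten_uses_for_a_data_set": (50, -100),
    "can_tell_marketing_from_reality": (25, -400),
}


def total_score(answers):
    """Sum the points for a dict mapping each question key to True (yes) or False (no)."""
    total = 0
    for key, (yes_points, no_points) in QUESTIONS.items():
        total += yes_points if answers[key] else no_points
    return total


def verdict(score):
    """Map a total to the guide's bands. Band edges are an assumption (lower bound inclusive)."""
    if score >= 300:
        return "Your team and culture can make things happen."
    if score >= 200:
        return "You can probably benefit from a big data project, but address gaps."
    if score >= 100:
        return "Work on becoming more data-driven, curious, and honest first."
    if score < 0:
        return "Watch out for pointy-haired bosses and dysfunctional office politics."
    return None  # 0 to 99: the guide does not define a band here


if __name__ == "__main__":
    # Example: yes to everything except the 80% question and the marketing question.
    answers = {key: True for key in QUESTIONS}
    answers["data_driven_over_80_percent"] = False
    answers["can_tell_marketing_from_reality"] = False
    score = total_score(answers)
    print(score, verdict(score))
```

Note how lopsided the table is: a perfect run of "yes" answers totals 750, while a single "no" on the marketing-vs-reality question costs 425 points relative to a "yes". The penalties are the point.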

Big data is valuable only for certain business problems, in certain organizational cultures, and with certain types of people involved. It is not a panacea.

The real panacea, as always, is having smart people, a curious/honest organizational culture, and a collective desire to do amazing things.

PASS Summit - A Veteran's Guide

02 November 2012

There have been some excellent posts about SQL PASS Summit: more advice than you can shake a stick at. I’m going to focus on 3 topics: picking sessions, endurance, and follow up.

Picking Sessions

This will be my 5th PASS Summit. I have experimented with different approaches to picking sessions, and come up with a guide. Time is valuable.

- Go to Dr. David DeWitt’s keynote/session. He is an intellectual powerhouse, and never to be missed.

- Narrow down your choices to the better presenters; don’t filter by subject yet. A good presenter can turn a topic from dry to riveting. A mediocre presenter does the reverse.

- Pick a mix of relevant topics. As a database developer, I choose a mix of performance tuning, T-SQL tips and tricks, architecture discussions, and professional development. Again, only consider the best presenters.

- Don’t judge a session by its level. 500-level sessions are always advanced; everything else depends on the presenter.

- Pick the session where you will ask more questions.

- Lightning talks are great. Go to them if nothing else awesome is happening.

- If nothing fits for a given time slot, take a break. Write down your notes, go for a walk, let your batteries recharge. Be social.

- Sessions given by Microsoft execs can be hit-or-miss on content.

- After each session, take notes on whether you like the topic and presenter. Use that for future reference.

Endurance

The week of PASS is grueling: 18-hour days of mental and social stimulation are common.

- Don’t expect to remember what you’ve seen in a session. Write it down for later.

- Drink lots of liquid. I have a cup of coffee, a bottle of water before lunch, water with lunch, 2 bottles of water in the afternoon, and a beer with dinner.

- Eat lightly at lunch. Otherwise you will be sleepy in the afternoon.

- Get the session recordings. There are always conflicts where two great sessions are happening at the same time. The recordings are great for this. They’re also a great deal.

- Use paper. Paper never runs out of batteries, is lighter than anything electronic, and is amazingly versatile when you want to draw diagrams.

- Chat with 2-3 new people each session. Learn a bit about what they’re doing, their expertise and challenges. Write this down on their business card. By the end of PASS you’ll have met a couple dozen people.

- Wisdom often sounds simplistic, obvious, or old. Don’t dismiss an idea or technique for those reasons; the best ideas are simple and obvious in hindsight.

- Drink in extreme moderation. Few things are more pointless than attending a session with a hangover.

- There is always something happening in the evening. Check Twitter to find out what. Barring that, go to Tap House; it’s the de facto watering hole.

Follow Up

The week of PASS Summit is too much for anyone to absorb fully. So, don’t expect to. A lot can be done in the following weeks.

- Go over your notes. Try out what you’ve learned. Doing something is the next step in learning after hearing about it.

- Follow up with people you’ve met. If you know of a blog post or contact particularly relevant to their job, role, or challenge, share your knowledge.

- Watch the sessions with your team. Lunch brownbags are great for this. Bring popcorn.

I hope to see you at PASS.
